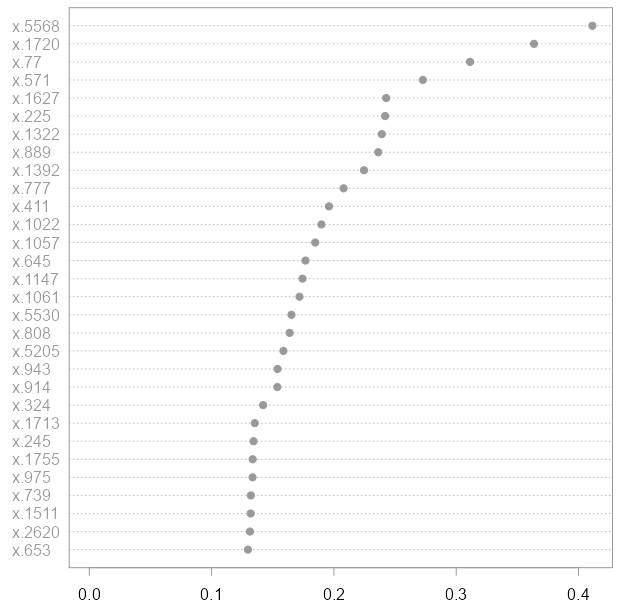

Check the source code: `cpdef compute_feature_importances(self, normalize=True)`, documented as """Computes the importance of each feature (aka variable).""". It declares `cdef np.ndarray importances` and `cdef Node* end_node = node + self.node_count`, initializes `importances = np.zeros((self.n_features,))` with `cdef DOUBLE_t* importance_data = importances.data`, and then walks every node up to `end_node`. For each internal node it adds `node.weighted_n_node_samples * node.impurity - left.weighted_n_node_samples * left.impurity - right.weighted_n_node_samples * right.impurity` to the entry of the feature that node splits on. Afterwards the array is divided by the weighted sample count of the root node (`importances /= nodes[0].weighted_n_node_samples`) and, if `normalize` is set, by its own sum, with a guard to avoid dividing by zero (e.g., when the root is pure).

Try calculating the feature importance by hand, for example `print("sepal length (cm)", 0)`. We get feature_importance: np.array(). After normalizing, we get array(), which is the same as `clf.feature_importances_`. Be careful: all classes are supposed to have weight one.

A brief description of the above method can be found in "Elements of Statistical Learning" by Trevor Hastie, Robert Tibshirani, and Jerome Friedman.

The same idea carries over to forests: learn a random forest as a classification/regression model to predict Y from X1, ..., Xp. Finally, we can train a model and export the feature importances: create a random forest model with default values (`rf = RandomForestClassifier()`), fit it to the training data (`rf.fit(X_train, y_train)`), and read the importances from `rf.feature_importances_`. A further option is the significance test suggested on the random forest website.

Feature importance in a random forest does not take into account co-dependence among features. For example, in the extreme case of 2 features that are both strongly related to the target, they will always end up with a feature importance score of about 0.5 each, whereas one would expect both to score something close to one.

The random forest technique can handle large data sets because it can work with many variables, running into the thousands. The decision trees in a forest are not pruned.
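The random forest step described above can be sketched as follows. This is a minimal illustration, not the original author's script: the iris data, the train/test split, and `random_state=0` are assumptions added so the example is reproducible.

```python
from sklearn.datasets import load_iris
from sklearn.ensemble import RandomForestClassifier
from sklearn.model_selection import train_test_split

# Assumption: iris is used only because the text mentions "sepal length (cm)".
iris = load_iris()
X_train, X_test, y_train, y_test = train_test_split(
    iris.data, iris.target, random_state=0
)

# Random forest with default hyperparameters (random_state fixed for repeatability).
rf = RandomForestClassifier(random_state=0)
rf.fit(X_train, y_train)

# Impurity-based importances, averaged over the trees and normalized to sum to 1.
importances = rf.feature_importances_

# Print the features from most to least important.
ranked = sorted(zip(iris.feature_names, importances), key=lambda p: p[1], reverse=True)
for name, value in ranked:
    print(f"{name}: {value:.4f}")
```

Because the scores are normalized to sum to one, two strongly correlated features tend to split the credit between them, which is the co-dependence caveat mentioned above.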
Random forest is a combination of decision trees that can be modeled for prediction and behavior analysis.

It is important to note that feature importance values are relative to a specific dataset (both the error reduction and the number of samples are dataset specific), so these values cannot be compared between different datasets.

As far as I know, there are alternative ways to compute feature importance values in decision trees.

The usual way to compute the feature importance values of a single tree is as follows. You initialize an array feature_importances of all zeros with size n_features. You traverse the tree: for each internal node that splits on feature i, you compute the error reduction of that node multiplied by the number of samples that were routed to the node, and add this quantity to feature_importances[i]. The error reduction depends on the impurity criterion that you use. It is the impurity of the set of examples that gets routed to the internal node minus the sum of the impurities of the two partitions created by the split.
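The traversal above can be reproduced in plain Python against a fitted scikit-learn tree. The sketch below is an illustration under stated assumptions: the helper name `manual_feature_importances` is hypothetical, the iris data is chosen only as an example, and the child impurities are weighted by their sample counts, following the `weighted_n_node_samples * impurity` terms in the library fragment quoted earlier.

```python
import numpy as np
from sklearn.datasets import load_iris
from sklearn.tree import DecisionTreeClassifier


def manual_feature_importances(fitted_tree, normalize=True):
    """Hypothetical helper: recompute impurity-based importances by hand."""
    tree = fitted_tree.tree_
    importances = np.zeros(fitted_tree.n_features_in_)

    for node in range(tree.node_count):
        left = tree.children_left[node]
        right = tree.children_right[node]
        if left == -1:  # -1 marks a leaf, which has no split to credit
            continue
        # Weighted impurity of the node minus that of its two children.
        importances[tree.feature[node]] += (
            tree.weighted_n_node_samples[node] * tree.impurity[node]
            - tree.weighted_n_node_samples[left] * tree.impurity[left]
            - tree.weighted_n_node_samples[right] * tree.impurity[right]
        )

    # Scale by the weighted number of samples at the root (node 0).
    importances /= tree.weighted_n_node_samples[0]

    if normalize:
        total = importances.sum()
        if total > 0.0:  # avoid dividing by zero, e.g. when the root is pure
            importances /= total
    return importances


iris = load_iris()
clf = DecisionTreeClassifier(random_state=0).fit(iris.data, iris.target)

manual = manual_feature_importances(clf)
for name, value in zip(iris.feature_names, manual):
    print(f"{name}: {value:.4f}")

# The hand-computed values should agree with the library attribute.
matches = np.allclose(manual, clf.feature_importances_)
print("matches clf.feature_importances_:", matches)
```

Passing `normalize=False` returns the unnormalized values, which makes it easier to see how much each split contributes before the scores are rescaled to sum to one.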
